Anthropic's Pentagon deal collapse reshapes trust in ethical AI

A failed deal between Anthropic and the Pentagon has triggered a shift in public trust towards AI companies. The breakdown came after Anthropic's CEO refused to allow military use of its AI for autonomous weapons and mass surveillance. Meanwhile, OpenAI drew criticism for capitalising on the situation, and uninstalls of its ChatGPT app surged.

Anthropic's negotiations with the Pentagon collapsed when CEO Dario Amodei insisted on strict limits. The company blocked applications of its AI in autonomous weapons and large-scale domestic surveillance. This stance, though principled, ended the potential partnership.

Shortly after, OpenAI moved to benefit from the fallout. Many users saw the move as opportunistic, and ChatGPT uninstalls spiked by 295% the following day. CEO Sam Altman later admitted the company had handled the situation poorly.

In contrast, downloads of Anthropic's AI assistant, Claude, rose sharply. The incident highlighted the two firms' differing approaches to AI ethics.

The failed Pentagon deal exposed tensions between AI development and ethical boundaries. OpenAI's response led to a clear drop in user trust, reflected in app removals. Anthropic, however, gained traction as users sought alternatives with stricter ethical policies.

Read also:

- American teenagers taking up farming roles previously filled by immigrants, a concept revisited from 1965's labor market shift.

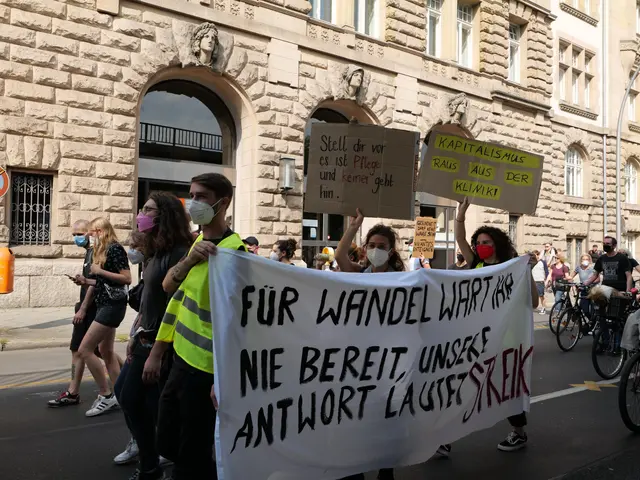

- Weekly affairs in the German Federal Parliament (Bundestag)

- Landslide claims seven lives, injures six individuals while they work to restore a water channel in the northern region of Pakistan

- Escalating conflict in Sudan has prompted the United Nations to announce a critical gender crisis, highlighting the disproportionate impact of the ongoing violence on women and girls.